What is Rubric Configuration?

Rubric Configuration allows you to define exactly when an evaluation should be marked as successful or failed. Instead of using default logic, you can create custom rules based on multiple metrics, set specific thresholds, and combine conditions with AND/OR logic.

Why Use Rubric Configuration?

- Define success criteria tailored to your use case

- Combine multiple metrics to determine overall success

- Set specific thresholds for different metric types

- Automatically pass/fail evaluations based on your rules

Navigation

To configure a rubric for your project:

- Click on the Rubric tab

Configuring Your Rubric

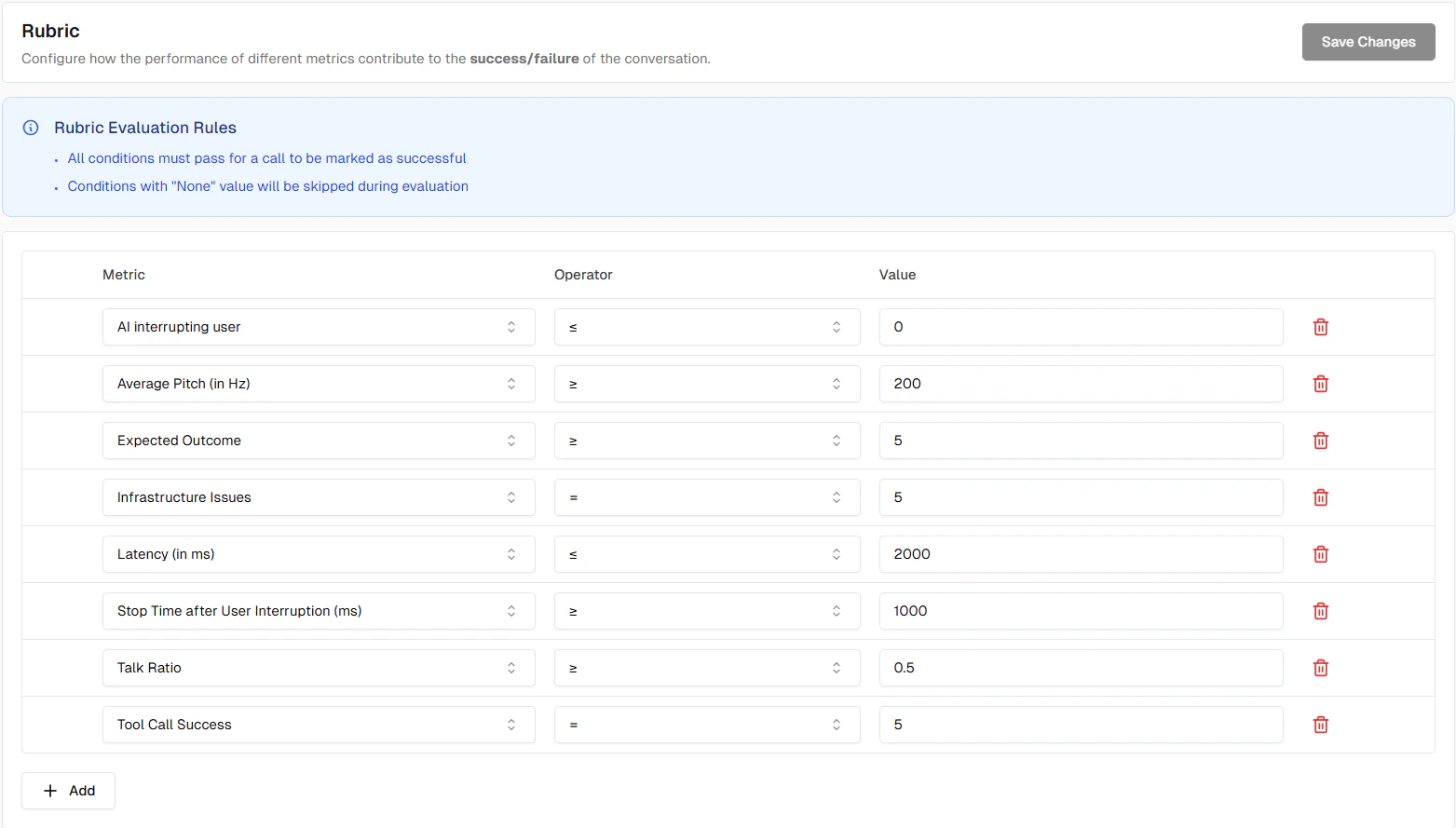

The rubric configuration page allows you to define rules for each metric in your project.

Components

Each rule consists of:

- Metric: Select which metric to evaluate

- Operator: The comparison operator (e.g., greater than or equal, less than or equal, equals, in)

- Value: The threshold or expected value
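The three components above can be pictured as a small data structure. The following is a minimal sketch only: the `Rule` class, the operator keys (`gte`, `lte`, `eq`, `in`), and the `passes` method are hypothetical names chosen for illustration, not the product's actual API or storage format.

```python
import operator
from dataclasses import dataclass
from typing import Any, Callable, Dict

# Hypothetical operator keys; the Rubric tab may label or store them differently.
OPERATORS: Dict[str, Callable[[Any, Any], bool]] = {
    "gte": operator.ge,                              # greater than or equal
    "lte": operator.le,                              # less than or equal
    "eq": operator.eq,                               # equals
    "in": lambda score, allowed: score in allowed,   # value is one of a set
}


@dataclass(frozen=True)
class Rule:
    metric: str     # which metric to evaluate
    operator: str   # key into OPERATORS
    value: Any      # threshold or expected value

    def passes(self, score: Any) -> bool:
        """Return True when the metric's score satisfies this rule."""
        return OPERATORS[self.operator](score, self.value)


rule = Rule(metric="Workflow Adherence", operator="gte", value=5)
```

With this sketch, `rule.passes(5)` holds and `rule.passes(3)` does not, mirroring a "greater than or equal to 5" condition.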

Common Examples

Example 1: All Boolean Metrics Must Pass

For evaluations to succeed, all binary metrics must have a score of 5 (success):

- Rule 1: Workflow Adherence score greater than or equal to 5

- Rule 2: Task Completion score greater than or equal to 5

Example 2: Acceptable Latency Threshold

Require low latency in addition to workflow adherence:

- Rule 1: Workflow Adherence score greater than or equal to 5

- Rule 2: Latency score less than or equal to 2000
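As a rough illustration, both examples can be written as lists of rules that must all hold (AND). This is a sketch under assumptions: the tuple layout, the operator keys, and the `evaluate` helper are hypothetical, and the sample scores are made up for demonstration.

```python
import operator

# Hypothetical operator keys used only in this sketch.
OPS = {"gte": operator.ge, "lte": operator.le}

# Example 1: all boolean metrics must pass.
example_1 = [
    ("Workflow Adherence", "gte", 5),
    ("Task Completion", "gte", 5),
]

# Example 2: workflow adherence plus an acceptable latency threshold.
example_2 = [
    ("Workflow Adherence", "gte", 5),
    ("Latency", "lte", 2000),
]


def evaluate(rules, scores):
    """Return True only if every rule is satisfied (AND logic)."""
    return all(OPS[op](scores[metric], value) for metric, op, value in rules)


# Illustrative scores, not real evaluation data.
fast_run = {"Workflow Adherence": 5, "Task Completion": 5, "Latency": 1800}
slow_run = {"Workflow Adherence": 5, "Task Completion": 5, "Latency": 2400}
```

Here `evaluate(example_2, fast_run)` succeeds, while `evaluate(example_2, slow_run)` fails because the latency rule is not met. Replacing `all` with `any` would give OR semantics instead.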

Auto-Added Metrics

Projects

For Projects, all default predefined metrics are automatically added to your rubric with appropriate success conditions. This gives you a ready-to-use rubric configuration from day one.

New Boolean Metrics

When you create new boolean metrics (Binary Qualitative or Binary Workflow Adherence), they are automatically added to your rubric with a default success condition:

- Operator: greater than or equal

- Value: 5
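The default condition for new boolean metrics could be modeled roughly as follows. The function name `default_rule`, the dictionary keys, and the `gte` operator key are assumptions made for this sketch; only the two metric type names and the threshold of 5 come from the text above.

```python
from typing import Any, Dict, Optional

# Metric types named in this section as receiving a default rule.
BINARY_METRIC_TYPES = {"Binary Qualitative", "Binary Workflow Adherence"}


def default_rule(metric_name: str, metric_type: str) -> Optional[Dict[str, Any]]:
    """Return the default success condition for a new boolean metric.

    Returns None for other metric types, which this sketch leaves
    without an auto-added rule.
    """
    if metric_type not in BINARY_METRIC_TYPES:
        return None
    # "gte" is a hypothetical key standing for "greater than or equal".
    return {"metric": metric_name, "operator": "gte", "value": 5}
```

For instance, `default_rule("Tone Check", "Binary Qualitative")` yields a rule requiring a score of at least 5, where "Tone Check" is a made-up metric name.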

Tips

Metric Skipping

The rubric automatically skips certain metrics:

- Metrics with null/empty values

- Metrics marked as irrelevant
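The skipping behavior can be sketched as a filter applied before each rule is checked. Everything here is illustrative: the `evaluate_with_skips` helper, the operator keys, and the `irrelevant` set are hypothetical. The sketch also assumes that a metric missing from the scores counts as null and that a rubric whose rules are all skipped passes, neither of which is confirmed by this page.

```python
import operator
from typing import Any, Dict, Iterable, Set, Tuple

# Hypothetical operator keys used only in this sketch.
OPS = {"gte": operator.ge, "lte": operator.le}


def evaluate_with_skips(
    rules: Iterable[Tuple[str, str, Any]],
    scores: Dict[str, Any],
    irrelevant: Set[str],
) -> bool:
    """Check rules with AND logic, ignoring skipped metrics."""
    for metric, op, value in rules:
        score = scores.get(metric)  # assumption: missing metric behaves like null
        if score is None or score == "" or metric in irrelevant:
            continue  # skipped metrics do not affect the outcome
        if not OPS[op](score, value):
            return False
    return True  # assumption: passes when no applicable rule fails


rules = [("Workflow Adherence", "gte", 5), ("Latency", "lte", 2000)]
```

With these rules, a run whose Latency is null still passes on Workflow Adherence alone, and a run with a Latency of 9000 passes only if Latency is marked as irrelevant.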

Next Steps

- Metrics Overview: Learn about different metric types

- Test Profile: Configure test identities